Why I Failed to Build a Lego-Style Coding Agent

Kubernetes Ingress-NGINX maintainer | Microsoft MVP | CNCF Ambassador

I wanted it simple. I made it simple. Then I discovered that making it actually useful meant adding feature after feature. What started as building blocks became an entire castle.

The Beginning: A Simple Idea

On November 30, 2025, I made my first commit: amcp agent init. In the README, I described it like this:

A Lego-style coding agent CLI with built-in tools (grep, read files, bash execution) and MCP server integration for extended capabilities.

Lego-style: that was my north star. I envisioned a coding agent that worked like Lego bricks:

Minimal core: Just grep, read_file, and bash (the essentials)

Composable: Extend capabilities through the MCP protocol

Lightweight: Only 2,482 lines of Python

Few dependencies: Just typer, rich, pydantic, mcp, and openai

And I succeeded. The initial AMCP was a clean, focused CLI tool:

src/amcp/

├── agent.py # 620 lines - Main agent loop

├── tools.py # 511 lines - Tool definitions

├── chat.py # 579 lines - Conversation handling

├── cli.py # 265 lines - CLI entry point

├── config.py # 169 lines - Configuration loading

├── mcp_client.py # 102 lines - MCP integration

└── readfile.py # 47 lines - File reading

Simple. Beautiful. Complete. Or so I thought.

Turning Point #1: The Context Window Explodes

Two weeks after launch (December 14, 2025), I hit my first real problem: context window overflow. When an agent works on complex tasks, conversation history grows indefinitely. My initial solution was brutal—just keep the last 20 messages. But that meant the agent would "forget" critical context from earlier in the session. I had no choice but to add compaction.py (+155 lines):

# 467d72b: feat: add context compaction
class Compactor:
    """Intelligently compress conversation history while preserving key information."""

This was the first "mandatory brick." Without it, the agent couldn't complete complex, multi-step tasks.
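The idea behind compaction can be sketched in a few lines. This is a minimal illustration under stated assumptions, not AMCP's actual Compactor: the function name `compact`, the `keep_recent` parameter, and the `summarize` callback (which in practice would probably call an LLM) are all hypothetical.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def compact(
    messages: list[Message],
    summarize: Callable[[list[Message]], str],
    keep_recent: int = 20,
) -> list[Message]:
    """Fold older turns into a single summary message.

    Hypothetical sketch: system messages and the last `keep_recent`
    non-system messages are kept verbatim; everything older is replaced
    by one summary produced by `summarize`.
    """
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    if len(rest) <= keep_recent:
        return list(messages)  # nothing to compact yet

    cut = len(rest) - keep_recent
    old, recent = rest[:cut], rest[cut:]
    summary = {
        "role": "user",
        "content": "Summary of earlier conversation:\n" + summarize(old),
    }
    return system + [summary] + recent
```

Compared with simply truncating to the last 20 messages, the older turns survive in condensed form. A real implementation would likely also need to avoid cutting between a tool call and its result, which this sketch ignores.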

Turning Point #2: Not Everyone Uses OpenAI

Two days later (December 16, 2025), reality knocked again: not everyone uses OpenAI.

a9455d5: feat: add support for ACP

fb6b08c: add anthropic and open_response LLM format support

This added 2,709 lines of code:

acp_agent.py: 752 lines (Agent Client Protocol support)

Anthropic Claude integration

OpenAI Responses API format support

What started as a simple openai.chat.completions.create() call was now a multi-headed abstraction layer. Different APIs, different formats, different quirks, all needing adaptation.
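One small example of the kind of adaptation involved: the same internal history has to be shaped differently per provider. The sketch below only builds request payloads and does not call any SDK. The function names `to_openai_chat` and `to_anthropic` are hypothetical, and it relies on two documented differences: Chat Completions accepts system messages inline, while the Anthropic Messages API takes the system prompt as a top-level `system` field and requires `max_tokens`.

```python
from typing import Any

Message = dict[str, str]  # {"role": "system" | "user" | "assistant", "content": ...}


def to_openai_chat(messages: list[Message], model: str) -> dict[str, Any]:
    """Build keyword arguments for an OpenAI Chat Completions request."""
    # Chat Completions accepts system messages inline in the message list.
    return {"model": model, "messages": list(messages)}


def to_anthropic(
    messages: list[Message], model: str, max_tokens: int = 1024
) -> dict[str, Any]:
    """Build keyword arguments for an Anthropic Messages request."""
    # The Messages API takes the system prompt as a top-level field,
    # not as a message, and requires max_tokens.
    system = "\n\n".join(m["content"] for m in messages if m["role"] == "system")
    turns = [m for m in messages if m["role"] != "system"]
    payload: dict[str, Any] = {
        "model": model,
        "max_tokens": max_tokens,
        "messages": turns,
    }
    if system:
        payload["system"] = system
    return payload
```

Tool calls, streaming events, and error shapes differ in similar ways, which is where most of the extra lines in an abstraction layer like this tend to come from.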

Turning Point #3: One Agent Isn't Enough

On Christmas Day 2025, I realized that complex tasks need multiple agents working together:

e61bba1: add multiple agents

5e58fc3: add TaskTool and EventBus

This added 2,246 lines of code:

multi_agent.py: 375 lines

message_queue.py: 531 lines

event_bus.py (eventually grew to 635 lines)

task.py (now 859 lines)

The jump from single-agent to multi-agent was a qualitative shift. My Lego bricks were becoming a castle.
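For readers unfamiliar with the pattern, an event bus at its smallest is a topic-to-handlers map. The sketch below is a hypothetical in-process version, not the contents of event_bus.py; the method names and the topic string used in the note are assumptions.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]


class EventBus:
    """Hypothetical in-process publish/subscribe bus."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a handler to be called for every event on `topic`."""
        self._handlers[topic].append(handler)

    def publish(self, topic: str, payload: dict[str, Any]) -> int:
        """Deliver payload to every subscriber of topic; return how many ran."""
        # Copy the list so handlers may subscribe during delivery.
        handlers = list(self._handlers.get(topic, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)
```

A planner agent could publish on a topic such as "task.created" while worker agents subscribe to it, so neither side needs a direct reference to the other. The gap between this and several hundred lines is typically async delivery, error isolation, and ordering guarantees.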

Turning Point #4: Users Need Extensibility

Between December 28, 2025 and January 2, 2026, I added two extensibility systems:

d746a8c: feat: add Hooks system for extensible agent behavior (v0.5.0)

17eeeb3: feat: add skills and commands system for agent extensibility

The Hooks system (+886 lines) lets users inject custom logic before and after tool execution. The Skills and Commands system (+836 lines) enables dynamic capability loading. I initially thought these were "nice to have." But when I started actually using AMCP for real work:

Dangerous operations needed validation → PreToolUse hooks

Everyone on the team had their own workflows → Commands

Different projects needed different expertise → Skills

Features I thought were optional turned out to be essential.
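A pre-tool-use hook of the kind described above might look like the following. This is a sketch under assumptions: the `HookResult` type, the hook signature, the `run_tool` wrapper, and the deny patterns are all hypothetical and are not AMCP's Hooks API.

```python
import re
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class HookResult:
    allow: bool
    reason: str = ""


PreToolUseHook = Callable[[str, dict[str, Any]], HookResult]
Executor = Callable[[str, dict[str, Any]], str]

# Illustrative patterns only; a real deny-list would be far more thorough.
DANGEROUS = [
    re.compile(r"\brm\s+-rf\s+/"),
    re.compile(r"\bgit\s+push\s+.*--force\b"),
]


def block_dangerous_bash(tool: str, args: dict[str, Any]) -> HookResult:
    """Reject bash commands that match a known-dangerous pattern."""
    if tool == "bash":
        command = str(args.get("command", ""))
        for pattern in DANGEROUS:
            if pattern.search(command):
                return HookResult(False, f"blocked by pattern {pattern.pattern!r}")
    return HookResult(True)


def run_tool(
    tool: str,
    args: dict[str, Any],
    execute: Executor,
    hooks: list[PreToolUseHook],
) -> str:
    """Run every hook first; execute the tool only if all of them allow it."""
    for hook in hooks:
        result = hook(tool, args)
        if not result.allow:
            return f"denied: {result.reason}"
    return execute(tool, args)
```

Returning the denial as a string rather than raising lets the agent see why the call was refused and try something else, which is one plausible design choice among several.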

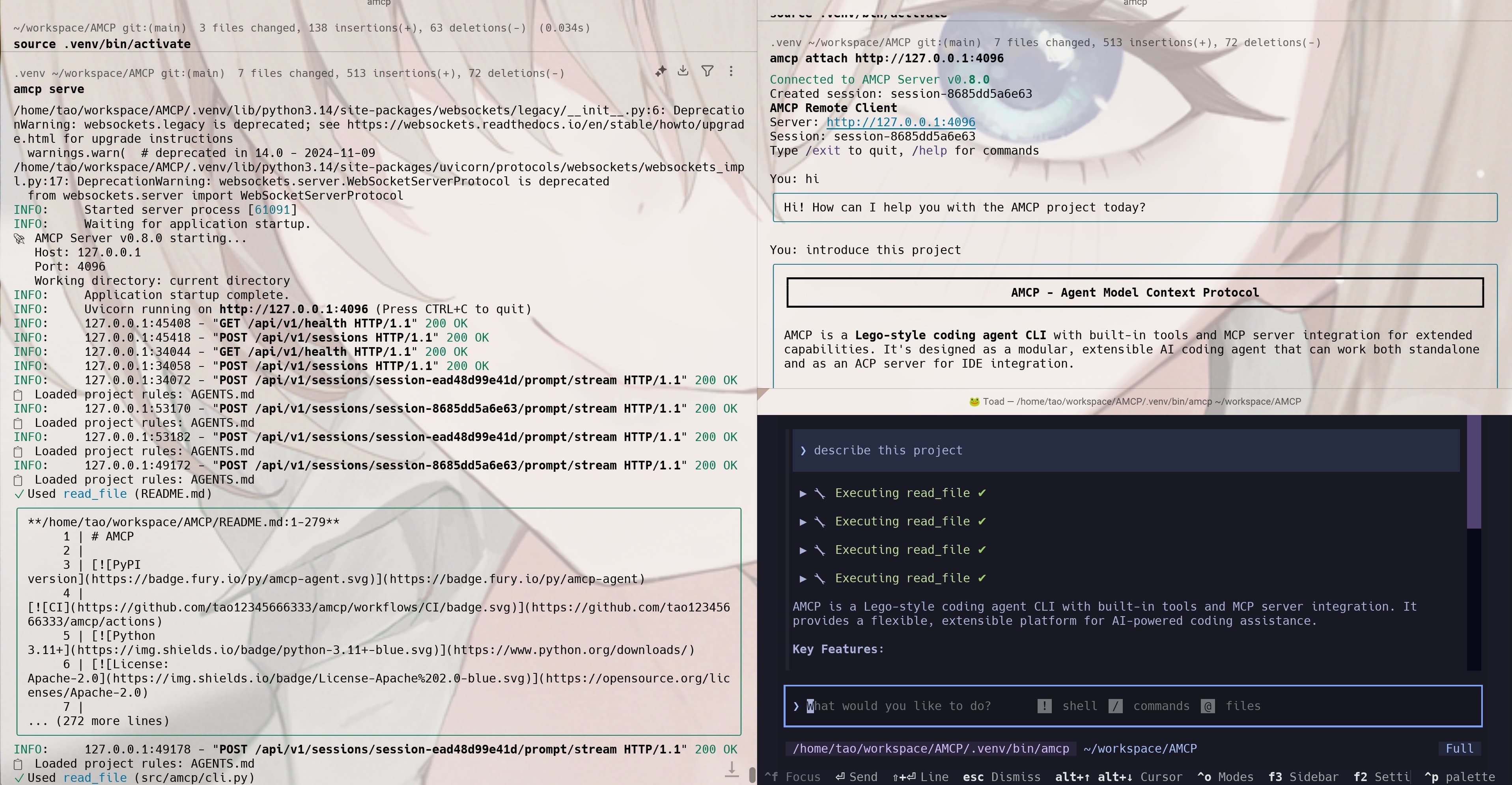

Turning Point #5: Production Needs a Server

January 7-9, 2026 brought the biggest refactor:

7f0ac35: feat: init C/S architecture

52c93c2: feat(server): complete Phase 2 - streaming & events

af7ce69: feat: complete Phase 3 - CLI Client SDK

f923591: feat: protocol and sessions

This added 3,266+ lines of code:

HTTP/WebSocket server

Session management

Event broadcasting system

Multi-client support

Protocol adaptation layer

Why? Because:

IDE integration requires persistent connections

Multiple clients need shared sessions

Real-time streaming requires WebSocket

Enterprise deployment requires a service architecture

The Numbers Tell the Story

Here's a before-and-after comparison:

| Metric | v0.1.0 (Initial) | v0.8.0 (Current) |
| --- | --- | --- |
| Lines of Code | 2,482 | 20,176 (8x growth) |
| Python Files | 8 | 53 (6.6x growth) |
| Dependencies | 7 | 14+ (2x growth) |
| Directory Depth | 1 level | 4 levels (added server/, client/, protocol/, prompts/) |
| Development Time | — | 40 days |
| Commits | 1 | 58 |

Growth Timeline

2025-11-30 ████ Initial version (2.5K lines)

2025-12-14 █████ + Context Compaction

2025-12-16 ████████ + ACP + Multi-LLM Support

2025-12-25 ████████████ + Multi-Agent + EventBus

2025-12-28 ██████████████ + Hooks System

2026-01-02 ████████████████ + Skills & Commands

2026-01-07 ████████████████████ + C/S Architecture

2026-01-09 █████████████████████████ Current version (20K+ lines)

Why "Simple" Doesn't Last

Looking back at 40 days of development, I've identified four reasons why simplicity was impossible to maintain:

1. Reality Is More Complex Than Your Imagination

I initially thought read_file + grep + bash would be enough. Reality disagreed:

Large files need chunked reading → smart readfile modes

Large-scale edits are error-prone → apply_patch tool

Complex refactoring needs planning → todo tool

Dangerous operations need confirmation → permission system
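The first item is a good example of how a "simple" tool grows. A chunked read might be sketched as below; the function name `read_file_chunk` and the `offset` and `limit` parameters are assumptions for illustration, not AMCP's actual readfile modes.

```python
from itertools import islice
from pathlib import Path


def read_file_chunk(path: str, offset: int = 0, limit: int = 200) -> str:
    """Return up to `limit` lines starting at 0-based line `offset`.

    Each returned line is prefixed with its 1-based line number so the
    model can refer to exact locations in a follow-up request.
    """
    with Path(path).open("r", encoding="utf-8", errors="replace") as handle:
        # islice skips the first `offset` lines without loading the whole file.
        window = islice(handle, offset, offset + limit)
        return "".join(f"{offset + i + 1}: {line}" for i, line in enumerate(window))
```

Reading a bounded window keeps a single tool result from flooding the context window, at the cost of the agent needing extra calls to page through a large file.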

2. User Needs Are Incremental

At first, I was the only user. Then others started using it:

"Can you support Claude?" → Multi-LLM support

"Can I use this in Zed?" → ACP protocol

"Can multiple agents collaborate?" → Multi-Agent architecture

"Can I customize workflows?" → Skills & Commands

"Can I deploy this as a service?" → C/S architecture

Every request was reasonable. Every feature became necessary.

3. Production Requires Robustness

Toys can be simple. Production systems must:

Handle edge cases

Manage resource lifecycles

Support concurrent access

Provide monitoring and debugging

Guarantee type safety

These "non-functional requirements" often require more code than the features themselves.

4. Composability Requires Infrastructure

Here's the irony: to achieve true "Lego-style" composability, you need:

A unified tool interface → BaseTool abstraction

A message passing mechanism → EventBus

Lifecycle hooks → Hooks system

Dynamic loading → Skills system

Configuration management → Complex config layer

The infrastructure for composability is itself a source of complexity.
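To show why even the first item costs code, here is a minimal sketch of a unified tool interface with a registry. Only the name BaseTool comes from the list above; the `run` signature, the `ToolRegistry` class, and `EchoTool` are hypothetical.

```python
from abc import ABC, abstractmethod
from typing import Any


class BaseTool(ABC):
    """Hypothetical uniform interface every tool implements."""

    name: str
    description: str

    @abstractmethod
    def run(self, **kwargs: Any) -> str:
        """Execute the tool and return its output as text."""


class ToolRegistry:
    """Maps tool names to instances so the agent loop can dispatch by name."""

    def __init__(self) -> None:
        self._tools: dict[str, BaseTool] = {}

    def register(self, tool: BaseTool) -> None:
        if tool.name in self._tools:
            raise ValueError(f"duplicate tool name: {tool.name}")
        self._tools[tool.name] = tool

    def call(self, name: str, **kwargs: Any) -> str:
        if name not in self._tools:
            return f"unknown tool: {name}"
        return self._tools[name].run(**kwargs)


class EchoTool(BaseTool):
    name = "echo"
    description = "Return the given text unchanged."

    def run(self, **kwargs: Any) -> str:
        return str(kwargs.get("text", ""))
```

With this in place, built-in tools and MCP-provided tools can sit behind the same `call` path. The bricks only snap together because something defines the studs.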

What I Learned

1. "Lego-Style" Is a Philosophy, Not a Destination

Lego bricks look simple. But Lego the company has thousands of different parts, strict quality standards, and a sophisticated design system behind those "simple" blocks. AMCP is still "Lego-style"—its design still prioritizes composability. But achieving composability requires significant complexity.

2. Complexity Is Necessary, But Must Be Managed

The codebase grew 8x, but if you look closely, complexity is distributed:

Core agent logic remains relatively simple

Complexity is encapsulated in individual modules

Modules communicate through clean interfaces

Complexity is inevitable, but it can be isolated.

3. Incremental Evolution Is the Right Path

If I had tried to design a 20,000-line system from day one, I would have:

Built features nobody actually needed

Optimized the wrong things too early

Lost the ability to iterate quickly

By starting simple and solving only "the most painful problem right now," AMCP evolved into something actually useful.

Conclusion: Did I Really "Fail"?

So, did I actually fail? If the goal was to stay at 2,500 lines of code—yes, I failed spectacularly. But if the goal was to build a coding agent that actually works in production—then I succeeded. The price of success was accepting that complexity is unavoidable. "Lego-style" isn't about simplicity. It's about the right abstractions, clear boundaries, and composable design. AMCP still has those.

Written on January 10, 2026

AMCP v0.8.0 | 58 commits | 20,176 lines of code